How to Design for Human-agent Interaction


Unreliable AI products are a design problem. Here's how to solve it.

Karri Saarinen has spent his career, at Airbnb and Coinbase and now as CEO of Linear, crafting software that keeps its promises. His argument is that AI's unpredictability isn't a model problem but an interface one: An agent sends a customer an email you meant to review first. The model did what it was told, but the interface never gave you a chance to say stop. In this piece, he shares the six-principle framework Linear has developed for how agents and humans should work together inside the same product, plus his nuanced take on a thorny question in AI design: Who should be accountable when an agent does something wrong? If you enjoy the piece, watch his episode on X or YouTube, or listen on Spotify or Apple Podcasts. -Kate Lee

I learned to design in a world where product design was a promise. It was a promise that a product would work how it's supposed to work. You sketch a user flow on a whiteboard, build it, and the system behaves the way you made it behave. A button does exactly what it says it will do, every time, and if it doesn't, that's a bug. This shaped my approach as a principal designer at Airbnb and Coinbase, and now as the CEO of Linear.

Lately I've been spending time with a different kind of tool, and that promise has grown harder to keep. I ask for help writing a plan, summarizing a discussion, and turning rough notes into something clearer. Sometimes the result is excellent, but small changes to my input shift the output in ways I didn't expect. The capability is impressive when it works, but the experience often feels slippery. I'm not always sure what I'll get back, or how much I should trust it.

Non-deterministic software breaks the contract. When outcomes can vary, sometimes wildly, based on what someone types into the same chat window, designing for reliability becomes genuinely harder. This slippery feeling is the design problem of this era, and it almost always traces back to the interface rather than the language model, which means it belongs to designers, not researchers.

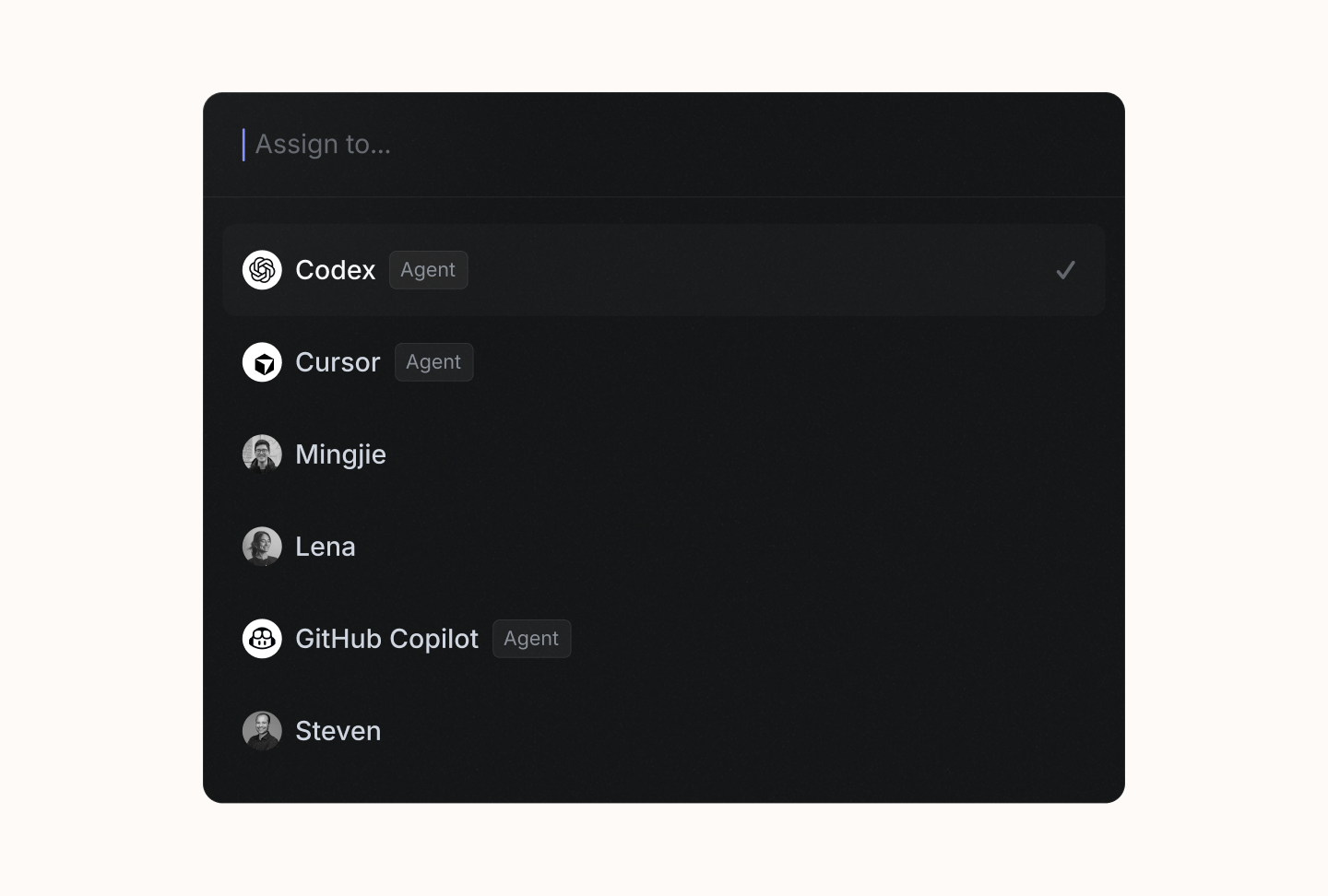

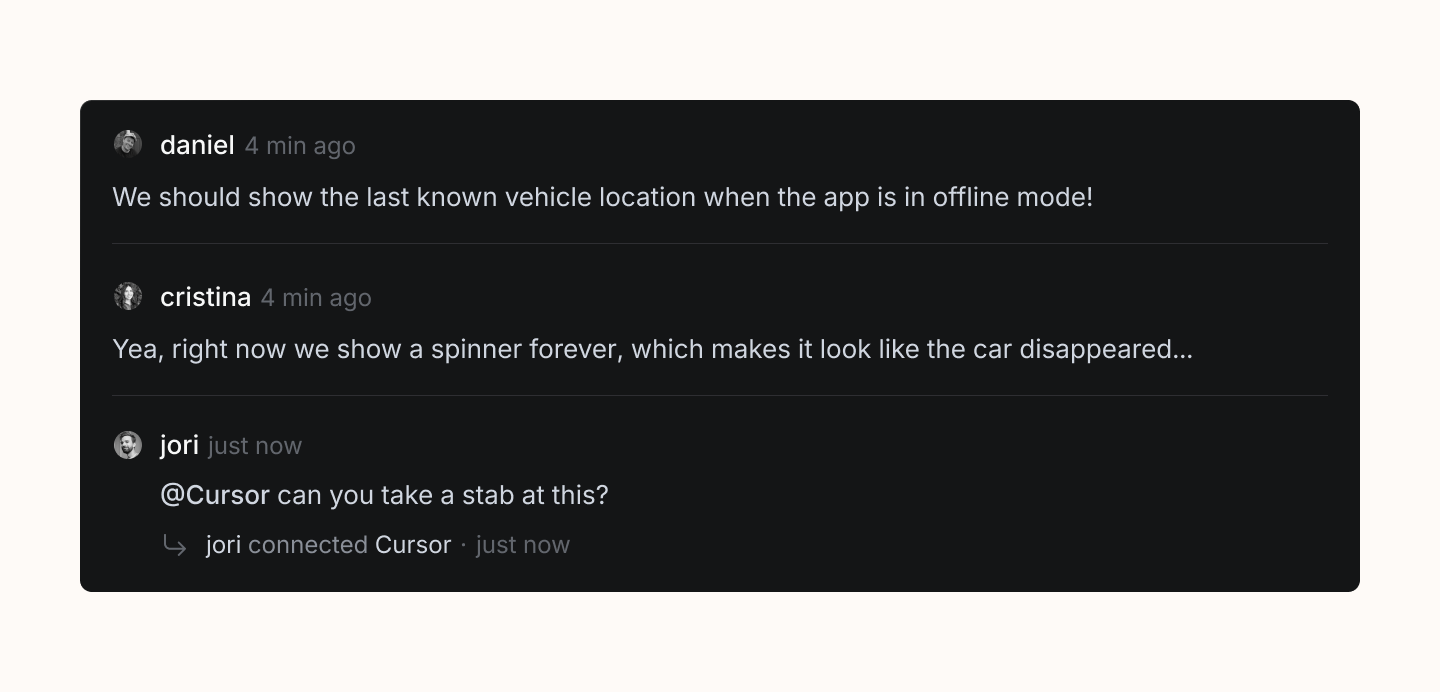

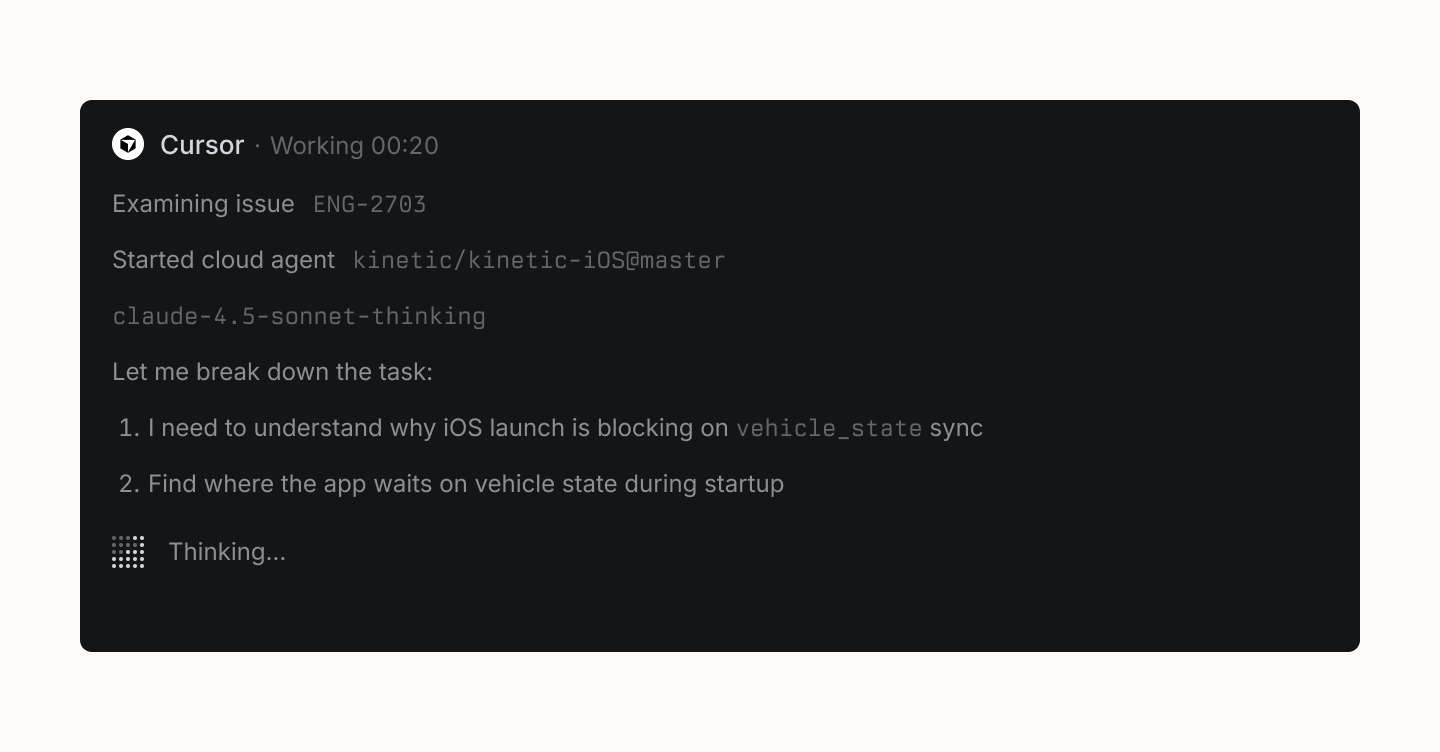

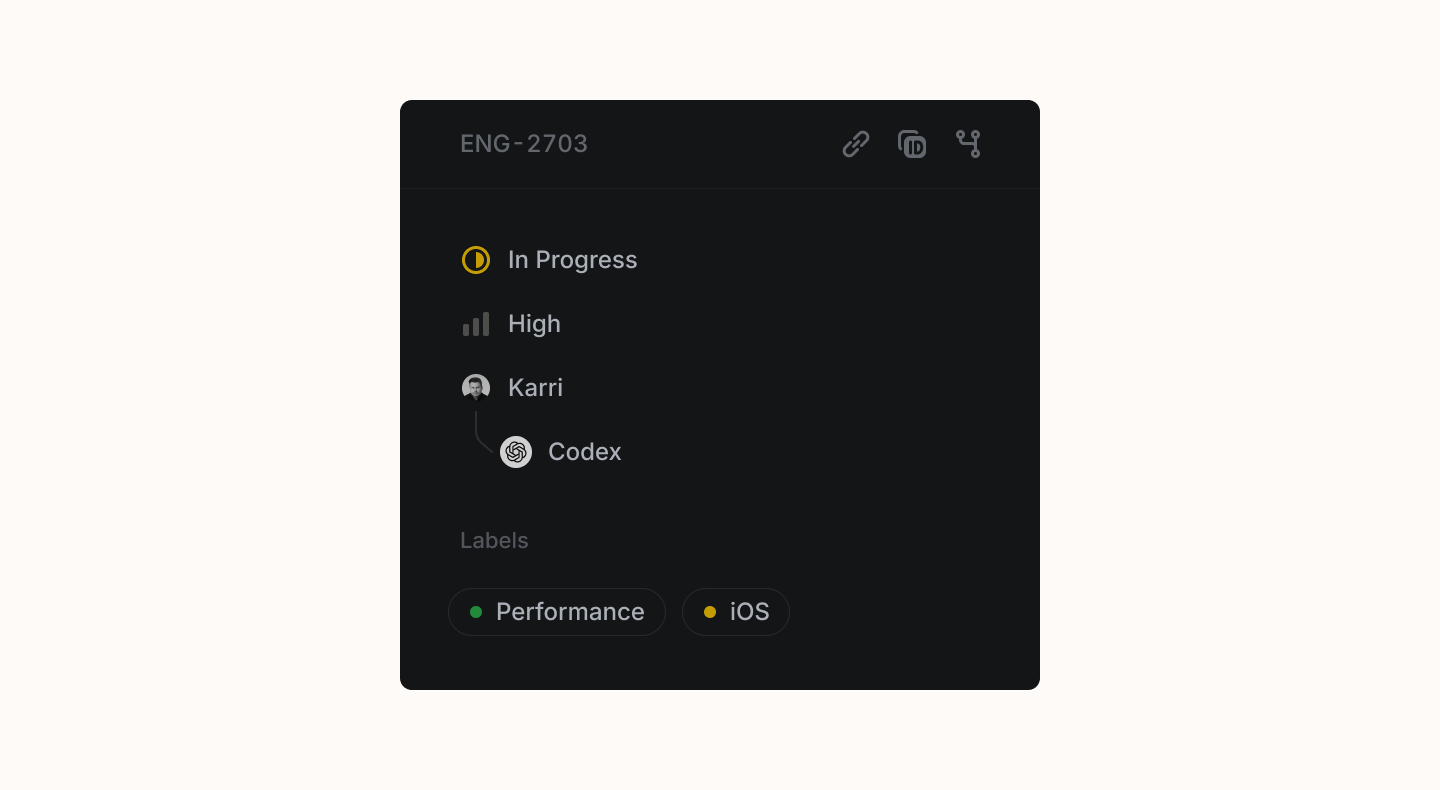

The limits of chat

The first interface that spread for AI tools was the chat window. That makes sense. When you don't know what something can do, the safest approach is to let people ask. A conversation feels familiar, it stretches across many situations, and it doesn't force a specific structure up front.

But the more you use chat for real work, the more its weakness shows. Everything becomes a stream of text that's hard to hold onto, hard to compare, and hard to connect to the rest of what you're doing. The quality of the output depends enormously on the quality of the input, which means two people asking for the same thing in slightly different ways can get drastically different results. There are few guardrails, and little structure nudging you toward a good outcome. The interface is essentially a blank page with a blinking cursor, and all the burden of getting value from it falls on the person typing.

For exploration, that's fine. For serious, repeated work inside a team, it's not enough. We need interfaces that bring more structure to AI interactions, that guide people (and agents) toward better outcomes without being so brittle they break the moment someone wants to use them in a way you hadn't anticipated.

Designing for new actors

There's a second, newer dimension to this problem that goes beyond improving interfaces for humans. Agents are already showing up inside products, working alongside people, and most software wasn't designed with that in mind.

For decades, interfaces have been designed so that humans can navigate them: buttons, menus, folders, navigation hierarchies. These patterns assume a person is looking at a screen, making decisions, and clicking through options. But when an agent is interacting with a product, the design challenge changes. The agent doesn't need a menu to find something. It doesn't browse. It acts, and the people around it need to understand what it did and why, often after the fact.
We need a new set of principles for how agents show up inside the tools people already use. Not principles for building agents themselves, but principles for designing ways that agents and humans interact within a shared product. At Linear, we've started calling these Agent Interaction Guidelines, and while they're still evolving, they represent how we think about this problem today.

An agent should always disclose that it's an agent

When humans and agents work side by side, people need instant certainty about who they're interacting with. This sounds obvious, but it's easy to get wrong. The agent has to signal its identity clearly enough that it can never be mistaken for a person, even in passing, even on a quick scan of a busy activity feed.

An agent should inhabit the platform natively

Agents should work through the same patterns and actions that humans use. If a person changes an issue's status or links a pull request, the agent should do it the same way, in the same place, with the same visual language. This makes the agent's work legible without anyone learning a new mental model. You already know how to read what happened, because the interface is the same one you've used all along.

An agent should provide instant feedback

Silence from an agent creates the same anxiety as silence from a colleague you've just asked for help. When invoked, an agent should provide immediate (but unobtrusive) feedback so the person knows their request was received. The details can come later.

An agent should be transparent about its internal state

More broadly, people need to understand, at a glance, whether an agent is thinking, waiting for input, executing a task, or finished with that task. And when they want to go deeper, they should be able to inspect the agent's reasoning, the tools and systems it used, and its decision logic. This separates a product you can trust from one that feels like a black box. Transparency makes speed feel safe.
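To make the last principle concrete, here is a minimal Python sketch of an agent status record with an at-a-glance summary and an inspectable detail view. It is an illustration under stated assumptions, not Linear's implementation: the names `AgentState`, `AgentActivity`, and `triage-bot` are hypothetical, and the four states are simply the ones named above.

```python
from dataclasses import dataclass, field
from enum import Enum


class AgentState(Enum):
    """The four states named in the text (hypothetical type name)."""
    THINKING = "thinking"
    WAITING_FOR_INPUT = "waiting for input"
    EXECUTING = "executing"
    FINISHED = "finished"


@dataclass
class AgentActivity:
    """Hypothetical record of what an agent is doing right now."""
    agent_name: str
    state: AgentState
    # Deeper detail, surfaced only when someone asks for it.
    reasoning: str = ""
    tools_used: list[str] = field(default_factory=list)

    def summary(self) -> str:
        # One line for a busy activity feed; always labeled as an agent.
        return f"[agent] {self.agent_name}: {self.state.value}"

    def details(self) -> str:
        # The inspectable view: reasoning and tools behind the summary.
        tools = ", ".join(self.tools_used) or "none"
        reasoning = self.reasoning or "not recorded"
        return f"{self.summary()}\nreasoning: {reasoning}\ntools: {tools}"


activity = AgentActivity(
    agent_name="triage-bot",
    state=AgentState.EXECUTING,
    reasoning="Issue matches the bug report template",
    tools_used=["search", "label"],
)
print(activity.summary())
print(activity.details())
```

The summary and the detail view are kept separate on purpose: the feed stays scannable, and the reasoning is one step away for anyone who wants to check it.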
An agent should respect requests to disengage

When asked to stop, an agent should stop immediately and stay stopped until it receives a clear signal to re-engage. This one feels simple, but it matters more than you'd think. An agent that keeps going after being told to stop, or that re-engages unprompted, erodes trust faster than one that makes mistakes. People need to feel that they're in control of the interaction, not the other way around.

An agent cannot be held accountable

I think about this principle most. The instinct to put a human in the loop is understandable, but taken literally, it can mean a person approving every step before anything moves forward. The human becomes a bottleneck, rubber-stamping work rather than directing it, and you lose much of what makes agents valuable in the first place.

The more important work happens before the agent even starts. An agent operating inside a well-designed system already has the context and constraints it needs to do good work. In Linear, that means project plans, issue backlogs, code, and documentation. These all shape what the agent does and how it does it. When you delegate an issue to an agent in Linear, the delegation is visible. There's a person who set the agent loose within that system, and that person is accountable for the outcome. You design the environment well, you let the agent run, and you own what it produces.

A working framework

We've honed these principles through what we've learned building agents into Linear over the past year, and we expect them to keep evolving as the technology and the patterns mature. The design language for human-agent collaboration is still being written, by us and by everyone else building in this space.

I feel confident, though, that the slippery feeling people associate with AI products is a solvable problem, and the solution looks more like thoughtful interface design than better models. The models will keep improving on their own.
The harder work is building the structure around them so that their output feels reliable, legible, and trustworthy. That's the design challenge on which to focus. And the reward for getting it right is that, over time, you can hand agents more and more of the work that doesn't need you, and spend your attention on the work that does.

Learn more about how Saarinen runs Linear and what he thinks of the "SaaS is dead" narrative. Watch his episode on X or YouTube, or listen on Spotify or Apple Podcasts.

Karri Saarinen is the cofounder and CEO of Linear. You can follow him on X at @karrisaarinen.
